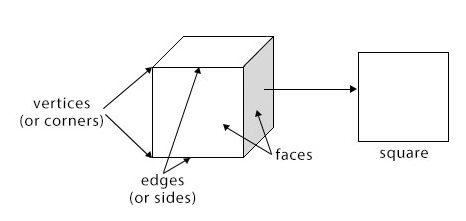

Right: A ConvNet arranges its neurons in three dimensions (width, height, depth), as visualized in one of the layers. Moreover, the final output layer would for CIFAR-10 have dimensions 1x1x10, because by the end of the ConvNet architecture we will reduce the full image into a single vector of class scores, arranged along the depth dimension.

As we will soon see, the neurons in a layer will only be connected to a small region of the layer before it, instead of all of the neurons in a fully-connected manner. (Note that the word depth here refers to the third dimension of an activation volume, not to the depth of a full Neural Network, which can refer to the total number of layers in a network.) For example, the input images in CIFAR-10 are an input volume of activations, and the volume has dimensions 32x32x3 (width, height, depth respectively).

In particular, unlike a regular Neural Network, the layers of a ConvNet have neurons arranged in 3 dimensions: width, height, depth. Convolutional Neural Networks take advantage of the fact that the input consists of images and they constrain the architecture in a more sensible way. Moreover, we would almost certainly want to have several such neurons, so the parameters would add up quickly! Clearly, this full connectivity is wasteful and the huge number of parameters would quickly lead to overfitting.ģD volumes of neurons. For example, an image of more respectable size, e.g. This amount still seems manageable, but clearly this fully-connected structure does not scale to larger images. In CIFAR-10, images are only of size 32x32x3 (32 wide, 32 high, 3 color channels), so a single fully-connected neuron in a first hidden layer of a regular Neural Network would have 32*32*3 = 3072 weights. Regular Neural Nets don’t scale well to full images. The last fully-connected layer is called the “output layer” and in classification settings it represents the class scores.

Each hidden layer is made up of a set of neurons, where each neuron is fully connected to all neurons in the previous layer, and where neurons in a single layer function completely independently and do not share any connections. As we saw in the previous chapter, Neural Networks receive an input (a single vector), and transform it through a series of hidden layers. These then make the forward function more efficient to implement and vastly reduce the amount of parameters in the network. So what changes? ConvNet architectures make the explicit assumption that the inputs are images, which allows us to encode certain properties into the architecture. SVM/Softmax) on the last (fully-connected) layer and all the tips/tricks we developed for learning regular Neural Networks still apply. And they still have a loss function (e.g. The whole network still expresses a single differentiable score function: from the raw image pixels on one end to class scores at the other. Each neuron receives some inputs, performs a dot product and optionally follows it with a non-linearity. Case Studies (LeNet / AlexNet / ZFNet / GoogLeNet / VGGNet)Ĭonvolutional Neural Networks (CNNs / ConvNets)Ĭonvolutional Neural Networks are very similar to ordinary Neural Networks from the previous chapter: they are made up of neurons that have learnable weights and biases.Converting Fully-Connected Layers to Convolutional Layers.
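The weight-count argument for CIFAR-10 can be checked with a few lines of Python. This is only a minimal sketch: the function names are made up for illustration, the 5x5 region is a hypothetical example size (the text says only "a small region"), the local neuron is assumed to span the full input depth, and ReLU is picked as one possible non-linearity.

```python
def fc_weights_per_neuron(width, height, depth):
    """Weights for one neuron fully connected to a width x height x depth input."""
    return width * height * depth


def local_weights_per_neuron(field_width, field_height, depth):
    """Weights for one neuron that sees only a small spatial region.

    Assumption: the region covers the full depth of the input volume.
    """
    return field_width * field_height * depth


def neuron_forward(inputs, weights, bias):
    """Dot product plus bias, followed by a ReLU (one possible non-linearity)."""
    pre_activation = sum(x * w for x, w in zip(inputs, weights)) + bias
    return max(0.0, pre_activation)


cifar_shape = (32, 32, 3)  # width, height, depth of a CIFAR-10 image

fc_count = fc_weights_per_neuron(*cifar_shape)
local_count = local_weights_per_neuron(5, 5, cifar_shape[2])  # hypothetical 5x5 region

print("fully-connected neuron:", fc_count, "weights")
print("locally-connected neuron:", local_count, "weights")
```

Under these assumptions the fully-connected neuron needs 3072 weights, matching the figure in the text, while the locally connected one needs 75. The gap widens as images grow, because the first count scales with the whole image and the second only with the region size.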
